In April 2025, a French consulting firm noticed something unexpected: a competitor that had never appeared in their keyword set was now being cited in ChatGPT responses to their core business queries. Traffic analysis confirmed it: that competitor was receiving 18% of its organic traffic from AI-generated sources while ranking on page 2 of Google for the same keywords.

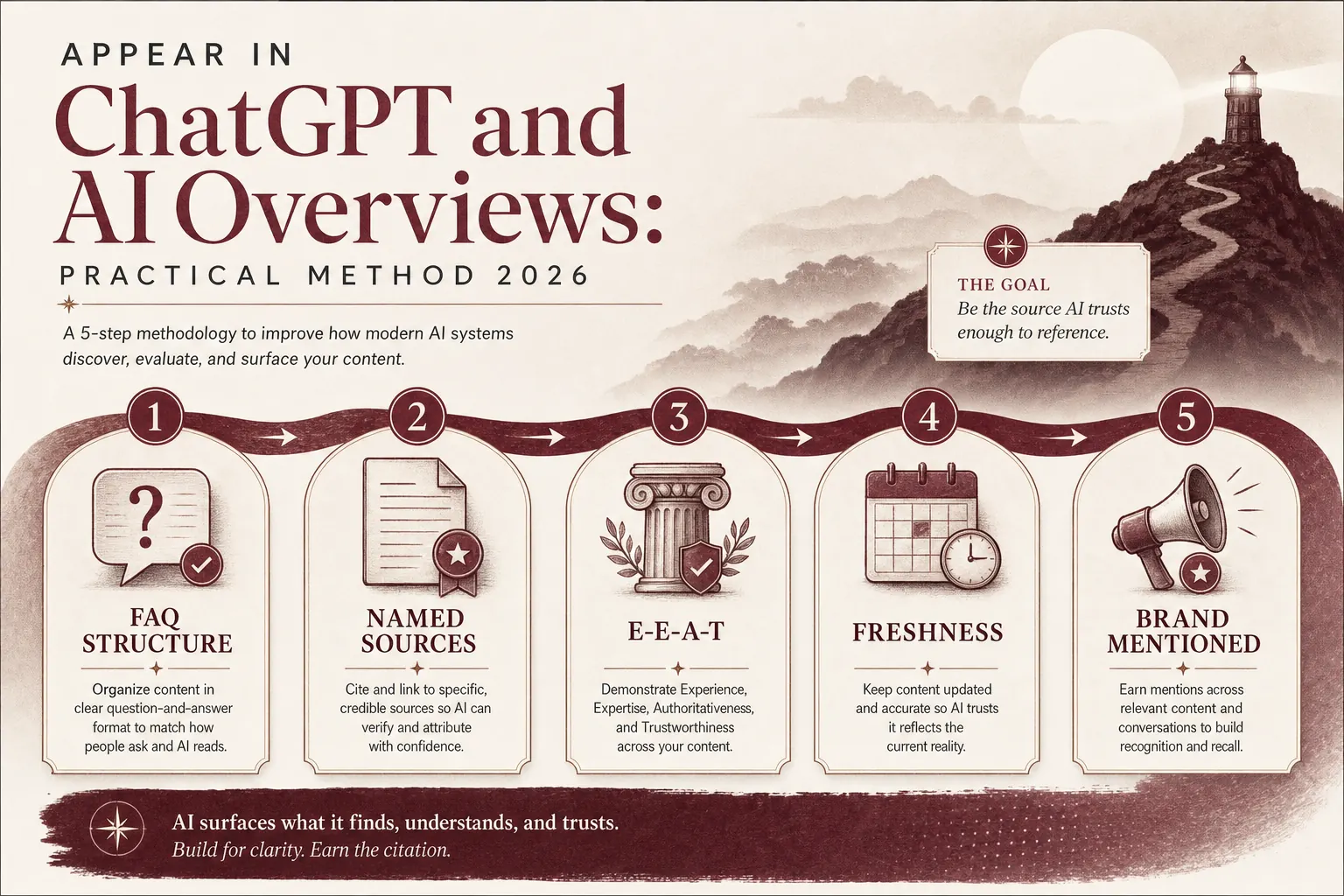

This scenario is no longer exceptional. In 2026, the question is no longer « should I optimize for AI? » but « how do I do it correctly before my competitors do? » This guide gives you the 7-step method, the one we apply at Cicéro for every client content piece.

What is GEO and why does it matter in 2026?

The critical asymmetry: zero-click AI answers hurt unoptimized sites while rewarding optimized ones. When a Google AI Overview cites your brand 3 times in a response, you get brand authority even without the click. When it cites your competitor instead, you get neither the click nor the brand impression.

GEO is not a replacement for SEO; it's the next layer. Without solid technical foundations and domain authority, GEO optimizations have limited effect. The two disciplines are cumulative.

How do ChatGPT and Google AIO select sources?

The two systems are fundamentally different in how they work, but share common selection criteria:

- Answer precision: a clear, verifiable answer immediately after the question beats a long paragraph that buries the answer

- Named sources: « According to Google's Search Quality Evaluator Guidelines (2024) » ranks higher than « according to experts »

- Semantic structure: FAQ schemas, clear headings, consistent vocabulary around a topic

- Topical authority: 10 linked articles on a topic outperform 1 isolated article for the same topic

- E-E-A-T: identifiable author with declared expertise, external mentions, verifiable reviews

One nuance for Google AIO specifically: Google tends to favor pages that already appear in the top 10 organic results for the query. GEO therefore requires maintaining a solid classical SEO foundation.

Not sure if your content already appears in AI answers? Our free diagnostic tests your 20 most important queries in ChatGPT and Google AIO.

Step 1, Audit your current AI visibility

How to do this audit

Open ChatGPT (GPT-4 or later) and Google (in a private window to avoid personalization). For each target query:

- Type the query as a user would (question form: « how to... », « what is the best... », « which solution for... »)

- Note whether your domain/brand appears in the response

- Note the 3-5 sites/brands that ARE cited

- Screenshot for your baseline

Priority queries to test: your top 10 GSC keywords + 5 high-intent commercial queries + 5 brand/niche definition queries (« what is [your service] », « how to [your core use case] »).

Important: ChatGPT's knowledge cutoff means it won't cite content published after its last training update unless you're using web-browsing mode. Focus your ChatGPT audit on whether your brand/site is known and how it's described, not just on whether specific recent articles appear.
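The audit above can be kept as a small structured log rather than loose screenshots. The sketch below is one possible way to assemble the 20-query set and count which sites are cited most often; the names `build_query_set`, `AuditRow` and `most_cited_sites` are illustrative assumptions, and the results themselves are still entered by hand after each manual test.

```python
from collections import Counter
from dataclasses import dataclass, field


def build_query_set(gsc_top: list[str], commercial: list[str],
                    definitional: list[str]) -> list[str]:
    """Top 10 GSC keywords + 5 commercial + 5 definition queries.

    Queries are normalized (trimmed, lowercased) and de-duplicated
    while keeping their original order.
    """
    picked = gsc_top[:10] + commercial[:5] + definitional[:5]
    return list(dict.fromkeys(q.strip().lower() for q in picked))


@dataclass
class AuditRow:
    """One manually tested query (field names are hypothetical)."""
    query: str
    channel: str                 # e.g. "chatgpt" or "google_aio"
    brand_cited: bool            # did your domain/brand appear?
    cited_sites: list[str] = field(default_factory=list)


def most_cited_sites(rows: list[AuditRow], n: int = 5) -> list[tuple[str, int]]:
    """Sites/brands that appear most often across all tested answers."""
    counts = Counter(site for row in rows for site in row.cited_sites)
    return counts.most_common(n)


if __name__ == "__main__":
    rows = [
        AuditRow("what is internal linking", "chatgpt", False,
                 ["example-a.com", "example-b.com"]),
        AuditRow("what is internal linking", "google_aio", True,
                 ["example-a.com"]),
    ]
    print(most_cited_sites(rows, n=3))
```

Saving this log alongside the screenshots gives you a baseline you can diff against in later audits.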

Step 2, Add direct-answer blocks after each H2

Here is the before/after of the same section, optimized for AI extraction:

Before: "Internal linking is an important aspect of SEO that many site owners neglect. In this section we will explore why it matters and how to approach it properly with a strategic mindset..."

After: "Internal linking is the practice of linking pages of the same site to each other using relevant anchor text. It serves three functions: distributing PageRank to priority pages, signaling topic structure to Google, and guiding users toward conversion."

The after version is self-contained: it answers « what is internal linking » and « why does it matter » in two sentences. An AI can extract it verbatim and attribute it to your site.

Implementation rule

For every H2 in your existing articles, apply this formula: [Concept] is [definition]. It [does/serves/enables] [function/benefit/application]. Then expand with detail. Never bury the answer in the third paragraph.
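To apply this rule retroactively across many articles, a rough automated pre-check can flag sections that need a rewrite. The sketch below is a heuristic only: the filler phrases, the two-sentence window and the function name `is_direct_answer` are assumptions chosen for illustration, and a human editor should review whatever it flags.

```python
import re

# Assumed filler phrases that announce content instead of delivering it.
FILLER_PHRASES = (
    "in this section",
    "in this article",
    "we will explore",
    "before we dive",
)

# "[Concept] is/are [definition]" at the start of the first sentence.
DEFINITION_PATTERN = re.compile(r"^[A-Z][^.?!]{0,80}?\b(is|are)\b\s+\S+")


def split_sentences(paragraph: str) -> list[str]:
    """Naive sentence splitter: break after ., ? or ! followed by whitespace."""
    return re.split(r"(?<=[.?!])\s+", paragraph.strip())


def is_direct_answer(paragraph: str) -> bool:
    """True if the paragraph opens with a definition and no filler.

    Checks the first two sentences for filler phrases, then checks that
    the first sentence follows the "[Concept] is [definition]" shape.
    """
    sentences = split_sentences(paragraph)
    opening = " ".join(sentences[:2]).lower()
    if any(phrase in opening for phrase in FILLER_PHRASES):
        return False
    return bool(DEFINITION_PATTERN.match(sentences[0]))


if __name__ == "__main__":
    sample = ("Internal linking is the practice of linking pages of the same "
              "site to each other using relevant anchor text.")
    print(is_direct_answer(sample))
```

Run it on the first paragraph under each H2; sections that return `False` are candidates for the rewrite formula above.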

Step 3, Implement FAQPage schema

The correct implementation, inline in the <head>:
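As a minimal sketch of such markup, the Python below builds a FAQPage JSON-LD object following the schema.org `FAQPage` / `Question` / `Answer` structure and wraps it in a `<script type="application/ld+json">` tag ready to place in the `<head>`. The helper names `faq_jsonld` and `as_script_tag` and the sample entry are illustrative assumptions, not a definitive implementation.

```python
import json


def faq_jsonld(entries: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in entries
        ],
    }


def as_script_tag(data: dict) -> str:
    """Serialize the object as an inline JSON-LD script tag."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so answer text can never close the script tag early.
    # "\/" is a valid JSON escape, so the payload still parses.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    entries = [
        (
            "What is internal linking?",
            "Internal linking is the practice of linking pages of the same "
            "site to each other using relevant anchor text.",
        ),
    ]
    print(as_script_tag(faq_jsonld(entries)))
```

Generating the markup from the same question/answer pairs that appear on the page keeps the schema and the visible content in sync.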

Three rules for effective FAQ entries:

- Each answer must be complete without the question: a reader seeing only the answer text should fully understand the response

- Avoid bare yes/no answers; always explain: « Yes, because... » or « No, because... »

- Match exact user queries: use People Also Ask data and autocomplete to identify the real phrasing users type

After implementation, validate at search.google.com/test/rich-results. A FAQPage that fails the Rich Results Test is not read by Google's AI systems.
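Before running the Rich Results Test, the first two entry rules can be pre-checked with a small linter. This is a sketch under stated assumptions: the 12-word minimum, the pronoun list and the function name `lint_faq_answer` are arbitrary illustrative choices, and the checks only approximate "complete without the question".

```python
import re

BARE_YES_NO = re.compile(r"^\s*(yes|no)\s*[.!]?\s*$", re.IGNORECASE)
# Answers that open with a pronoun usually depend on the question for meaning.
DANGLING_OPENERS = ("it ", "they ", "this ", "that ", "these ", "those ")
MIN_WORDS = 12  # assumed threshold, tune for your own content


def lint_faq_answer(answer: str) -> list[str]:
    """Return a list of problems found in one FAQ answer (empty if none)."""
    problems = []
    text = answer.strip()
    if BARE_YES_NO.match(text):
        problems.append("bare yes/no: add the reason")
    # Ignore a leading "Yes," / "No," when checking how the answer opens.
    body = re.sub(r"^(yes|no)\b[,:]?\s*", "", text, flags=re.IGNORECASE)
    if body.lower().startswith(DANGLING_OPENERS):
        problems.append("opens with a pronoun: name the subject")
    if len(text.split()) < MIN_WORDS:
        problems.append(f"shorter than {MIN_WORDS} words")
    return problems


if __name__ == "__main__":
    for answer in ("Yes.", "It depends on the size of your site and how "
                           "many pages you publish each month."):
        print(repr(answer), "->", lint_faq_answer(answer))
```

An empty list does not guarantee a good answer; it only means none of these three heuristics fired.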

Step 4, Structure your definitions

Examples of the format applied:

Unstructured: "Core Web Vitals are metrics that Google uses to measure the experience of users on websites."

Structured: "Core Web Vitals are three Google performance metrics (LCP, INP, CLS) that measure loading speed, interactivity, and visual stability, and that have directly influenced organic search rankings since the 2021 Page Experience update."

The structured version adds: the specific components (LCP, INP, CLS), the three dimensions they measure, and the concrete impact (ranking influence). An AI extracting this definition can answer « what are Core Web Vitals? » fully, making your page a preferred citation candidate.

Step 5, Cite named, verifiable sources

The naming standard

Every statistic, study reference, or factual claim needs a source that follows this format: [Organization], [Year]. Examples:

- "65% of Google searches with AI Overviews result in zero additional clicks, Search Engine Land, 2025" ✓

- "According to Google's official documentation on E-E-A-T (Search Quality Evaluator Guidelines, December 2024)" ✓

- « Studies show that structured content ranks better » ✗

- « Research indicates that most users prefer... » ✗

When you don't have a named source for a claim, either find one before publishing or rewrite the claim as your own observation: « In our analysis of 47 French SME sites audited in Q1 2026, we found that... ». This is still citable because it's a named, specific source (Cicéro, date, sample size).
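A quick scan can catch vague sourcing before publication. The sketch below classifies a sentence as `vague`, `named` or `unsourced`; the phrase list and the "[Organization], [Year]" regular expression are assumptions that will miss many real-world formats, so treat the result as a prompt for review, not a verdict.

```python
import re

# Assumed list of vague sourcing phrases; extend with your own.
VAGUE = re.compile(
    r"\bstudies show\b|\bseveral studies\b|\bresearch (?:indicates|shows|suggests)\b"
    r"|\bexperts (?:agree|say)\b|\baccording to experts\b|\bmany companies\b",
    re.IGNORECASE,
)

# Capitalized name(s), a comma, an optional month, then a four-digit year,
# e.g. "Search Engine Land, 2025" or "... Guidelines, December 2024".
NAMED_SOURCE = re.compile(
    r"[A-Z][\w'&.-]*(?:\s+[A-Z][\w'&.-]*)*,\s+(?:[A-Z][a-z]+\s+)?(?:19|20)\d{2}\b"
)


def classify_claim(sentence: str) -> str:
    """Return 'vague', 'named' or 'unsourced' for one sentence."""
    if VAGUE.search(sentence):
        return "vague"
    if NAMED_SOURCE.search(sentence):
        return "named"
    return "unsourced"


if __name__ == "__main__":
    for claim in (
        "Studies show that structured content ranks better",
        "65% of Google searches with AI Overviews result in zero "
        "additional clicks, Search Engine Land, 2025",
    ):
        print(classify_claim(claim), "|", claim)
```

First-party observations written as prose (« In our analysis of 47 French SME sites... ») do not match the pattern and come back as `unsourced`, so they still need a manual check.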

Step 6, Build thematic coherence across your site

This is the « semantic cocoon » principle applied to GEO: instead of producing isolated articles, you build clusters. One pillar article covers the broad topic in depth. 4-8 spoke articles cover specific sub-topics. All link to each other with contextual anchor text.

Minimum viable cluster

- 1 pillar: 3,500+ words, full topic coverage, FAQPage and HowTo schemas

- 3-4 spokes: 1,500-2,500 words each, specific angles, link back to pillar

- Consistent vocabulary: same terms for the same concepts across all articles

- Internal links: pillar links to all spokes, each spoke links to at least 2 others + pillar
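The internal-link rule of the minimum viable cluster is easy to verify mechanically once you have a map of which page links to which. The sketch below assumes a hypothetical `links` dictionary (URL to the set of internal URLs it links to), which you would need to export from your own crawler; the function name `check_cluster` is likewise illustrative.

```python
def check_cluster(pillar: str, spokes: list[str],
                  links: dict[str, set[str]]) -> list[str]:
    """Check the cluster linking rule and return a list of violations.

    Rule checked: the pillar links to every spoke, and each spoke links
    back to the pillar and to at least 2 other spokes.
    """
    problems = []
    spoke_set = set(spokes)

    # Pillar must link to all spokes.
    for spoke in sorted(spoke_set - links.get(pillar, set())):
        problems.append(f"pillar does not link to {spoke}")

    for spoke in spokes:
        outgoing = links.get(spoke, set())
        if pillar not in outgoing:
            problems.append(f"{spoke} does not link back to pillar")
        siblings = outgoing & (spoke_set - {spoke})
        if len(siblings) < 2:
            problems.append(
                f"{spoke} links to {len(siblings)} other spoke(s), needs 2"
            )
    return problems


if __name__ == "__main__":
    links = {
        "/seo-guide": {"/a", "/b", "/c"},
        "/a": {"/seo-guide", "/b", "/c"},
        "/b": {"/seo-guide", "/a", "/c"},
        "/c": {"/seo-guide", "/a"},
    }
    print(check_cluster("/seo-guide", ["/a", "/b", "/c"], links))
```

Note that with only 3 spokes, each spoke must link to both of the others to satisfy the rule.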

Need help building your content clusters? Our SEO + GEO audit maps your existing content and identifies the highest-priority clusters to build first.

Step 7, Strengthen E-E-A-T signals

Priority signals for 2026

1. Author identification. Every article needs a named author (not « the editorial team ») with a Person schema in JSON-LD. Include: name, job title, LinkedIn URL, @id pointing to an author page. The author page itself should list their credentials, published work, and external mentions.

2. External brand mentions. When third-party sites (trade press, industry directories, partner sites) mention your brand by name, AI systems interpret this as an authority signal. Actively seek press mentions, contribute guest articles to established publications, and get listed in credible industry directories.

3. Third-party reviews. A reference to « 4.7/5 based on 83 reviews on Google » with a verifiable link is stronger than any self-declaration. Platforms like Trustpilot and Google Business Profile are recognized by AI systems as independent sources.

4. Publication date management. Always include datePublished and dateModified in your Article schema. Freshness matters for time-sensitive queries: an answer to « best practices 2026 » is much more likely to cite a 2026 article than a 2023 one.
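Signals 1 and 4 both live in the Article schema. The sketch below builds an `Article` object with a nested `Person` author and ISO-formatted dates, using standard schema.org properties (`headline`, `datePublished`, `dateModified`, `author`, `jobTitle`, `sameAs`). The function name `article_jsonld`, the author details and all URLs are placeholders invented for illustration.

```python
import json
from datetime import date


def article_jsonld(headline: str, author_name: str, job_title: str,
                   author_page: str, linkedin_url: str,
                   published: date, modified: date) -> dict:
    """Build a schema.org Article object with a Person author."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {
            "@type": "Person",
            "@id": author_page,        # stable identifier: the author page
            "name": author_name,
            "jobTitle": job_title,
            "url": author_page,
            "sameAs": [linkedin_url],  # external profile(s) of the same person
        },
    }


if __name__ == "__main__":
    data = article_jsonld(
        headline="Example article title",
        author_name="Jane Doe",                        # placeholder author
        job_title="SEO consultant",
        author_page="https://example.com/authors/jane-doe",
        linkedin_url="https://www.linkedin.com/in/example",
        published=date(2026, 1, 15),
        modified=date(2026, 2, 10),
    )
    print(json.dumps(data, indent=2))
```

Updating `dateModified` only when the content actually changes keeps the freshness signal credible.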

4 pitfalls that block AI citation

AI systems extract the most direct, self-contained answer. If you spend 3 paragraphs contextualizing before getting to the answer, the AI either selects a competitor's page or generates the answer from its training data (without citing you). Fix: apply the direct-answer block rule from Step 2 retroactively to all existing content.

A technically perfect article with no JSON-LD schemas is an invisible article from a structured-data perspective. Google's AI systems rely heavily on schema.org to understand content type and extract specific elements. Fix: FAQPage schema at minimum, Article schema with author and dates, BreadcrumbList for navigation context.

« Many companies » / « several studies » / « experts agree »: these formulations are unfalsifiable, therefore unreliable, therefore uncitable. AI systems are trained to flag vague sourcing. Fix: every claim needs a named actor (company, researcher, institution) and a year.

A single article on a topic, with no internal links to related content, signals a site that hasn't fully developed expertise on that topic. This reduces AI citation probability. Fix: build content clusters (Step 6). Even 2-3 linked articles on a topic measurably increase citation probability for that topic's queries.

Measuring your GEO performance

| Metric | Source | Measurement frequency | Target |
|---|---|---|---|
| AI Overview appearances (manual sampling) | Manual Google search (private window) | Monthly, 20 queries | Cited in ≥30% of tested queries |
| ChatGPT brand mentions | Manual ChatGPT test | Monthly, 10 queries | Correct brand description without competitors |
| Featured Snippet appearances | Google Search Console | Weekly | Growing trend month-over-month |
| FAQPage rich result validation | Rich Results Test | After each new article | 100% pass rate before publication |
| Zero-click query share | GSC impressions vs. clicks | Monthly | Stable or declining (AI Overview coverage helps even with low CTR) |
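Two of these metrics reduce to simple ratios. The sketch below shows one way to compute them from your monthly counts; the function names are illustrative, and the zero-click formula (impressions that did not produce a click, divided by impressions) is an assumed reading of "GSC impressions vs. clicks", not an official Google definition.

```python
def citation_share(cited: int, tested: int) -> float:
    """Share of manually tested queries where the brand was cited."""
    if tested <= 0:
        raise ValueError("tested must be a positive number of queries")
    return cited / tested


def meets_citation_target(cited: int, tested: int, target: float = 0.30) -> bool:
    """True if the citation share reaches the target (default: 30%)."""
    return citation_share(cited, tested) >= target


def zero_click_share(impressions: int, clicks: int) -> float:
    """Assumed definition: impressions without a click / impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return (impressions - clicks) / impressions


if __name__ == "__main__":
    # Example month: cited in 7 of 20 tested AI Overview queries.
    print(meets_citation_target(cited=7, tested=20))
    print(round(zero_click_share(impressions=1000, clicks=40), 3))
```

Track both numbers in the same spreadsheet each month so that trends, not single readings, drive decisions.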

The limits of current GEO measurement

Be transparent with clients and management about what cannot yet be measured precisely: we cannot track direct AI-to-site referrals the way we track organic Google clicks. Some AI Overviews cite sources without showing them to users. ChatGPT web browsing citation data is not accessible via API. The field is still developing its measurement standards in 2026.

What this guide does not cover

This method focuses on content-level optimizations for ChatGPT and Google AI Overviews, the two dominant AI channels in France and globally in 2026. It does not cover: voice search optimization (distinct set of constraints), image/video AI citation, or technical crawl budget optimization for AI crawlers. These are valid extensions of GEO strategy but require separate treatment.